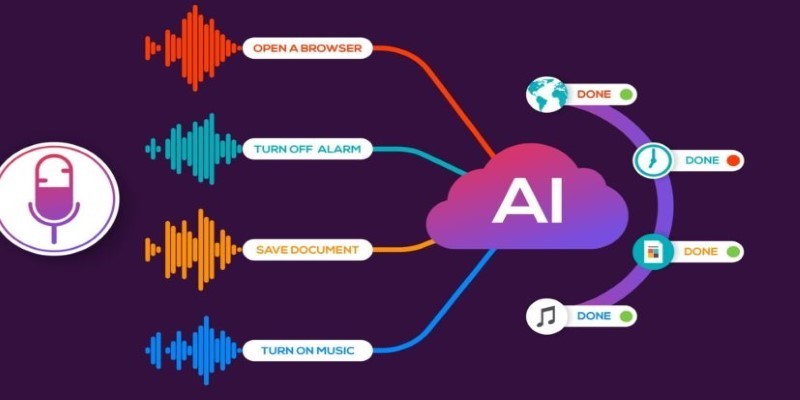

Understanding speech feels effortless in casual conversation, but it's a much bigger challenge for machines. Speech recognition powered by AI doesn't just "listen"—it deciphers and interprets spoken words, adapting to various accents, speeds, noise, and speaking styles. This process drives virtual assistants, transcription tools, and voice-controlled systems.

The journey from raw sound to machine comprehension involves complex steps that combine sound science, deep data, and advanced models. By breaking down these layers, AI systems can transform human speech into meaningful, actionable information in ways that continue to improve over time.

When you speak into a device, the first thing that happens is signal conversion. The microphone captures analog sound waves and converts them into digital signals—essentially, a stream of numbers. This raw digital audio is the input for the speech recognition process. But before anything can be understood, the system needs to clean the data. It filters out background noise, adjusts for volume inconsistencies, and segments the stream into manageable slices.

From there, things get into feature extraction. Imagine handing the machine a magnifying glass to zoom in on patterns in your voice. These patterns aren't word-based—they include tone, pitch, and frequency. The machine isn't "hearing" like humans do. It's instead looking at these features mathematically to determine which sounds you uttered.

Phoneme recognition is the process where AI identifies the smallest sound units that distinguish words, like the difference between "bat" and "pat." The system compares extracted features to its phoneme database. This is challenging because English has around 44 phonemes, and their pronunciation varies based on factors like region, background, and emotion, making accurate recognition tricky.

AI enhances speech recognition by using statistical models like Hidden Markov Models (HMM) or neural networks. These models don’t rely on exact matches alone; they predict possibilities based on context. For example, if the system hears “I scream,” it analyzes surrounding words, sentence structure, and common usage patterns to determine whether you meant “ice cream” instead.

Once the system identifies the phonemes and forms them into words, it still doesn’t understand them. That’s where natural language processing comes in. NLP is the branch of AI responsible for making sense of human language, not just translating it from sound to text.

NLP algorithms parse the recognized words into a structured form that machines can work with. This involves understanding grammar, syntax, and semantics. For example, when you say, "Book a flight to Cairo," the system must know that "book" is a verb here, not a noun. It must detect intent, assign meaning, and relate the phrase to specific commands or actions.

This interpretation layer allows speech recognition tools to work in real-life applications. If the system gets the words right but the meaning wrong, the entire experience breaks. That’s why modern voice assistants integrate NLP deeply with speech recognition—so they not only transcribe what you said but also understand what you meant.

NLP also enables continuous learning. The more you use a voice-based system, the more it adapts to your speaking style, preferences, and vocabulary. Over time, your virtual assistant becomes more personalized—not just in voice detection but also in comprehension. This adaptability is one of the most critical benefits AI brings to speech recognition.

Despite the advances in speech recognition technology, several challenges persist. One of the biggest hurdles is accent variation. For example, a person from Texas may sound vastly different from someone in Mumbai or London. While humans can often understand each other with patience, machines rely on pre-trained models that might not be exposed to every accent, leading to recognition errors. AI developers address this by training models on diverse datasets, but underrepresented accents still struggle with accuracy.

Emotion in speech also poses a challenge. A word spoken with different emotions, like a cheerful or annoyed “yes,” can sound very different. Since emotions influence pitch, speed, and tone, AI may struggle with phoneme detection. While advanced systems incorporate emotion analysis and affective computing, the field is still developing.

Noise is another issue. In noisy environments like busy streets or cars, speech recognition systems often struggle to isolate the speaker’s voice from the background sounds. To address this, technologies like beamforming microphones and noise-canceling filters are used, but achieving reliable performance in chaotic settings remains an ongoing challenge for AI systems.

Training AI to recognize speech is an ongoing, iterative process that requires vast amounts of data and continuous refinement. AI models are fed thousands of hours of recorded speech, paired with accurate transcriptions, to expose them to a variety of languages, accents, genders, and age groups. This ensures the model learns to handle diverse speech patterns.

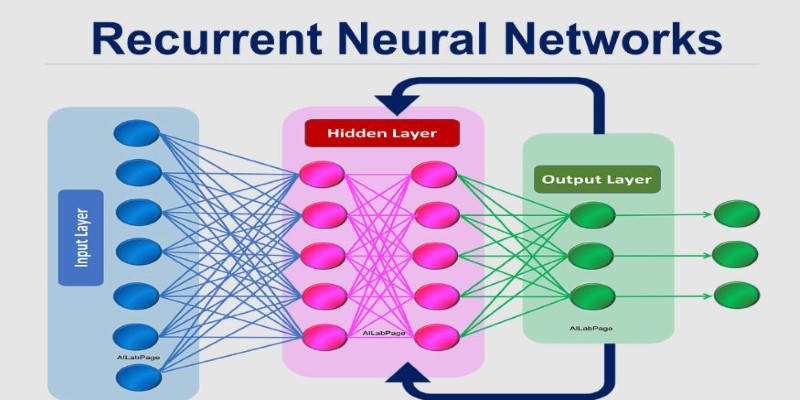

Supervised learning is key, where humans annotate speech data—correcting errors and flagging misinterpretations. These adjustments help the model improve over time. Deep learning models, such as recurrent neural networks (RNNs) and transformers, are commonly used in modern speech recognition. RNNs excel at understanding sequences, making them ideal for processing speech, as they remember the context of previous words. Transformers, like those behind GPT models, can analyze long stretches of text at once, aiding in understanding complex speech.

A significant advancement in recent years is the shift toward end-to-end models. These models map audio input directly to text, bypassing traditional layers, resulting in faster and more accurate recognition, particularly when paired with cloud computing and real-time processing.

As datasets grow and models evolve, AI's understanding of speech continues to improve, inching closer to achieving—or even surpassing—human-level comprehension.

Speech recognition powered by AI has significantly advanced in recent years, enabling machines to understand human speech with increasing accuracy. By combining signal processing, natural language processing, and deep learning, AI systems can interpret spoken language in real time. Although challenges like accents, noise, and emotions remain, the progress continues. As AI models evolve, the gap between machine recognition and human understanding will continue to close, making voice-driven technologies more intuitive and accessible in everyday life.

How AWS Braket makes quantum computing accessible through the cloud. This detailed guide explains how the platform works, its benefits, and how it helps users experiment with real quantum hardware and simulators

Boost your Amazon sales by optimizing your Amazon product images using ChatGPT. Learn how to craft image strategies that convert with clarity and purpose

How the AI Hotel Planned for Las Vegas at CES 2025 is set to transform travel. Explore how artificial intelligence in hospitality creates seamless, personalized stays for modern visitors

Generate your OpenAI API key, add credits, and unlock access to powerful AI tools for your apps and projects today.

Speech recognition uses artificial intelligence to convert spoken words into digital meaning. This guide explains how speech recognition works and how AI interprets human speech with accuracy

Understand how transformers and attention mechanisms power today’s AI. Learn how self-attention and transformer architecture are shaping large language models

AI in insurance is transforming the industry with smarter risk assessment and faster claims processing. Discover how technology is improving accuracy, reducing fraud, and enhancing customer experience

AI changes the workplace and represents unique possibilities and problems. Find out how it affects ethics and employment

To decide which of the shelf and custom-built machine learning models best fit your company, weigh their advantages and drawbacks

Master MLOps to streamline your AI projects. This guide explains how MLOps helps in managing AI lifecycle effectively, from model development to deployment and monitoring

Learn how to lock Excel cells, protect formulas, and control access to ensure your data stays accurate and secure.

Explore the role of probability in AI and how it enables intelligent decision-making in uncertain environments. Learn how probabilistic models drive core AI functions